- Category:Consumer intelligence

How to use social listening for consumer insights in 2026

Learn how social listening delivers real-time consumer insights that surveys miss — and how to track sentiment, trends, and demographics in Talkwalker.

- Category:Social listening

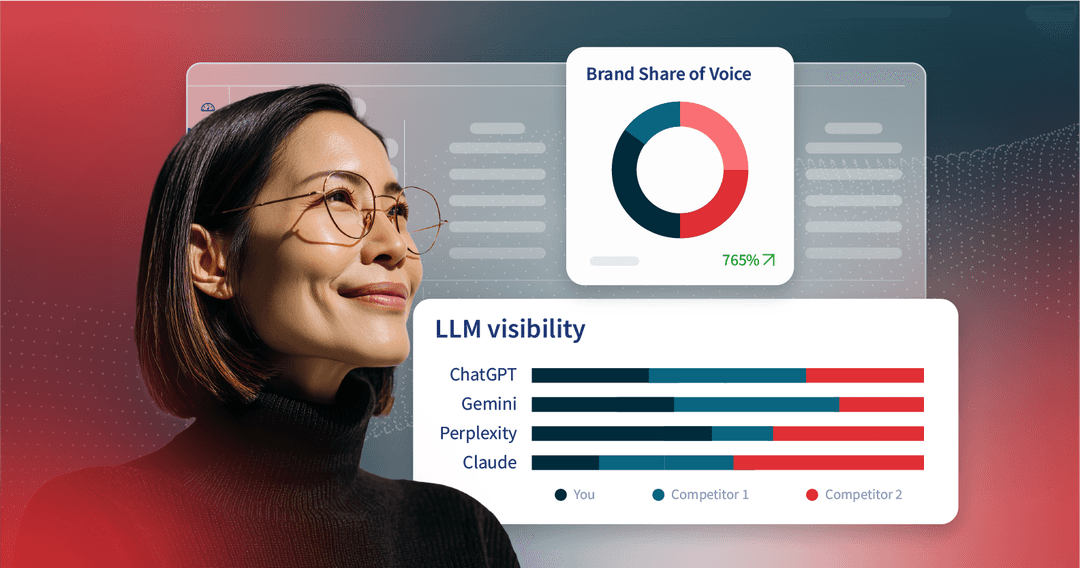

How to track brand mentions in AI search with Talkwalker

Learn how to track brand mentions in AI search with Talkwalker and gain visibility into how AI tools shape your brand perception.

- Category:Social listening

What Super Bowl LX audiences actually talked about (Social listening data)

From the halftime show to brand performance, Super Bowl LX generated massive online engagement. Explore the social listening insights that reveal what resonated most with global audiences.

- Category:Social listening

How to use social search to build visibility and protect your brand

Social media search is the new discovery engine. Learn how to show up across social networks and online forums using advanced tactics and social listening to grow visibility and manage reputation.

- Category:Trend insights

How to track and forecast social media trends in 2026

Stay ahead of social media trends in 2026. Learn how to spot early signals, track trend velocity, and forecast what’s next before trends peak.

- Category:Consumer intelligence

What is SOCMINT? Definitions, tools, and tips

Learn how social media intelligence uses public data to support security, cyber defense, crisis response, and disinformation detection.

- Category:Social listening

The state of agentic AI in marketing (2026)

Discover how agentic AI in marketing is redefining the future: autonomous insights, faster decisions, trusted data, and the breakthrough workflows reshaping every marketing team in 2026.

- Category:Social listening and monitoring tools

How to use AI agents to uncover marketing insights in 2026

Explore how AI agents help marketers turn massive datasets into instant insights, automate research and reporting, and unlock faster, smarter decision-making across the entire marketing ecosystem.

- Category:Crisis management

7 social media crisis examples (and tips for speedy mitigation)

Learn how to prevent and manage a social media crisis with real examples and tactical tips for protecting your brand reputation.

- Category:Trend insights

Why The Life of a Showgirl is a cultural moment

Taylor Swift’s The Life of a Showgirl is more than an album—it’s a cultural moment. See how her launch strategy lit up millions of conversations.

- Category:Social media analytics

8 successful social media campaigns + tips for tracking success

The best social media campaign examples from 2025 — and what they teach brands about virality, creativity, and results.

- Category:Competitive analysis

Competitive intelligence: How to turn data into an advantage

Learn how to use competitive intelligence to uncover market trends, analyze competitors, and turn public data into strategic insights that give your brand an edge.

- Category:Trend insights

What drove online conversations at the IAA 2025?

From EV breakthroughs to trade tensions — explore what dominated the online conversation around IAA 2025, and what it says about the auto industry’s future.

- Category:

Beyond the runway: Insights from Fashion Week 2025

Fashion Week shows how viral moments, sentiment shifts, and social listening insights can turn trends into business impact — lessons every brand can use.

- Category:

How to run an audience analysis that will inform better marketing

Move beyond assumptions with audience analysis. Use data to uncover who your audience is and how to connect with them through smarter marketing strategies.

Unpack online conversations and outplay your competitors with our leading social listening and benchmarking tools.