
How to track brand mentions in AI search with Talkwalker

Learn how to track brand mentions in AI search with Talkwalker and gain visibility into how AI tools shape your brand perception.

March 10, 2026

If you want to know what AI tools are saying about your company, you need to understand how to track brand mentions in AI search.

From product comparisons to recommendation lists, AI answers are shaping your brand narrative before buyers ever reach your website.

What “brand mentions” mean in AI search

Brand mentions in AI search are how your company appears inside an AI-generated answer. Instead of sending users to a list of links, as traditional Google search does, AI search engines (like ChatGPT or Gemini) generate a short summary that explains who you are, what you offer, and how you compare to others.

When someone asks an AI system for recommendations, comparisons, product FAQs, or guidance related to your niche, the model pulls from articles, reviews, social media posts, and other content marketing materials at the same time.

Here’s how this differs from traditional search:

| Traditional brand mention | AI search brand mention |
| --- | --- |
| Single article or post | Blended summary from many sources |
| Clear author and context | Limited or compressed context |
| Easy to trace | Hard to deconstruct |
| Measured by volume | Measured by narrative framing |

Research shows that 36% of generative AI users have already replaced traditional search engines with AI assistants, and one in four use them for shopping and price comparisons. That means an AI summary about your business could be the first thing a potential customer sees.

In many cases, it influences decisions before anyone clicks through to your website. Research from Authoritas shows that click-through rates can fall by up to 80% on some queries when AI summaries appear in Google search results.

Summary: Understanding how AI creates these descriptions is the first step in learning how to track brand mentions in AI search effectively.

Why don’t traditional brand monitoring methods apply?

Traditional tracking tools were built for web pages, articles, and social media posts, but AI search does not work that way.

Traditional monitoring focuses on:

Mention volume

Reach

Sentiment scores

Share of voice

But AI search requires a different lens. You need to understand:

How your brand is described

Which strengths are repeated

Which weaknesses are emphasized

How competitors are framed next to you

Whether important differentiators are missing

In AI search, what matters is not just whether you show up but also how you’re described. If you can’t see how AI systems are summarizing your brand, you can’t benchmark or manage how you’re being positioned.

Summary: Traditional monitoring measures how often your brand appears. AI search requires you to measure how your brand is described, framed, and positioned in AI-generated answers.

Why is manual prompting inconsistent and hard to scale?

Manual prompting is inconsistent and hard to scale because AI responses change all the time. LLM responses depend on how the question is phrased, which model you use, where you’re located, and even when you ask.

You might test one prompt and feel confident in your brand mention, but another question could tell a very different story.

Manual checks also don’t:

Track changes over time

Compare answers across AI models

Show patterns in tone or risk

Create reports you can share with leadership

Summary: Manual prompting gives you one-off answers, not a clear, repeatable view of AI brand awareness.

Why doesn’t citation tracking show the full picture?

Citation tracking doesn’t show the full picture because most people only read the summary, not the sources themselves. An AI answer might link to your website, but still describe your brand in a way that feels outdated or incomplete.

Citations don’t tell you:

How your brand is positioned

Whether the tone sounds positive or cautious

How you compare to competitors

What important details were left out

Summary: Citations may link to your product, while summaries still tell an outdated story about your brand.

Why don’t SEO and AEO tools govern AI perception?

SEO and AEO tools (like Semrush and Ahrefs) are built to help your content get found. They focus on improving rankings, visibility, and performance in search engine results pages (SERPs). They are not designed to track how AI describes your brand over time.

Most SEO and AEO tools:

Aim to optimize search or prompt performance

Focus on keywords and content structure

Measure success through rankings, clicks, or traffic

They don’t show you what AI tools are actually saying about your brand. They don’t track how that description changes over time. And they don’t reveal whether the tone is positive, cautious, or critical.

In short, they help you try to influence answers — but they don’t help you monitor the answers themselves.

Summary: SEO and AEO monitoring tools improve visibility, but they don’t give leaders a clear view of how AI is defining their brand presence.

The metrics that matter in AI search

The metrics that matter in AI search are the patterns inside AI-generated answers, not just whether your brand appears. Instead of counting mentions, you need to understand how AI describes you, how it compares you to others, and what tone it uses.

| Traditional listening metric | What to track in AI search instead |
| --- | --- |
| Mention volume | Narrative themes |
| Share of voice | Competitive framing |
| Reach | Category positioning |
| Sentiment score | Tone and confidence language |

Here’s what you should be paying attention to.

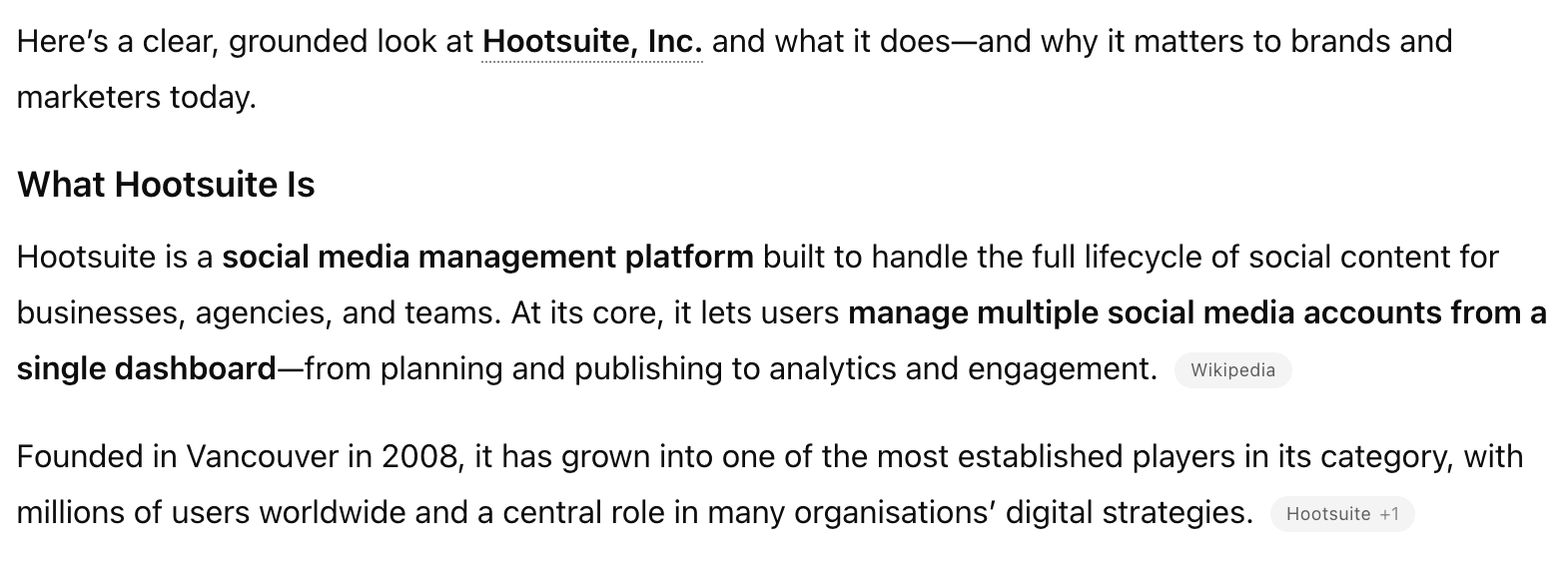

1. AI-generated narratives

AI-generated narratives are the recurring themes AI assistants use when describing your brand. Over time, these themes form a consistent story about who you are and what you offer.

For example, AI might repeatedly describe your company as enterprise-focused SaaS, unaffordable, complex, innovative, or easy to use. When the same strengths and weaknesses show up across different queries, that becomes your AI narrative.

These narratives matter because buyers often treat them as neutral summaries. If AI consistently highlights certain features and leaves out others, that shapes how your brand is understood.

When reviewing AI-generated narratives, look for:

Repeated descriptions of your strengths

Repeated mentions of limitations

Features that are emphasized

Capabilities that are missing
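To make the idea of a "recurring" description concrete, here is a minimal Python sketch that counts how many saved AI answers contain each descriptor and keeps the ones that show up in at least half of them. The brand name "Acme", the descriptor list, and the 50% threshold are all illustrative assumptions, and the matching is naive substring matching, not how any particular tool works.

```python
from collections import Counter

# Hypothetical descriptor list (assumption); swap in terms relevant to your brand.
DESCRIPTORS = ["enterprise-focused", "unaffordable", "complex", "innovative", "easy to use"]


def recurring_themes(answers, descriptors=DESCRIPTORS, min_share=0.5):
    """Return {descriptor: share of answers} for descriptors seen in >= min_share of answers."""
    total = len(answers)
    if total == 0:
        return {}
    counts = Counter()
    for answer in answers:
        text = answer.lower()
        for term in descriptors:
            if term in text:  # naive substring match
                counts[term] += 1  # count each answer at most once per descriptor
    return {term: n / total for term, n in counts.items() if n / total >= min_share}


# Made-up answers about a fictional brand, for illustration only.
answers = [
    "Acme is an innovative but complex platform.",
    "Acme is innovative and easy to use for small teams.",
    "Some reviewers find Acme complex to set up.",
]
themes = recurring_themes(answers)
```

Here "innovative" and "complex" each appear in two of the three answers, so they pass the threshold, while "easy to use" appears only once and is dropped.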

Summary: AI-generated narratives reveal the consistent story AI tells about your brand.

Source: ChatGPT

2. Sentiment and tone of mentions

Sentiment and tone show how confident, cautious, or neutral AI sounds when presenting your brand. Even subtle wording can influence how trustworthy or risky your company feels.

An answer might clearly recommend you, mention trade-offs, or include soft warnings. Words like “leader,” “trusted,” or “popular” build confidence. Phrases like “may not suit larger teams” or “can be expensive” introduce hesitation.

Over time, tone patterns can signal a shift in perception. A change from confident language to cautious language should raise red flags.

When tracking sentiment and tone, pay attention to:

Whether the AI sounds confident recommending you

Whether caveats are emphasized

Whether competitors are framed more positively

Whether certain concerns are repeated
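As a rough illustration of tracking tone, the sketch below labels an answer as confident, cautious, or neutral by counting the example phrases mentioned above. The phrase lists and the simple majority rule are assumptions made for this example; real tone analysis would need far more nuance than substring counting.

```python
# Phrase lists taken from the examples in this section; extend them for real use.
CONFIDENT_PHRASES = ["leader", "trusted", "popular"]
CAUTIOUS_PHRASES = ["may not suit", "can be expensive"]


def tone_label(answer):
    """Label an AI answer by comparing counts of confident vs. cautious phrases."""
    text = answer.lower()
    # Naive substring counting: "unpopular" would also match "popular".
    confident = sum(text.count(phrase) for phrase in CONFIDENT_PHRASES)
    cautious = sum(text.count(phrase) for phrase in CAUTIOUS_PHRASES)
    if confident > cautious:
        return "confident"
    if cautious > confident:
        return "cautious"
    return "neutral"


# "Acme" is a fictional brand used only for illustration.
labels = [
    tone_label("Acme is a trusted leader in the space."),
    tone_label("Acme can be expensive and may not suit larger teams."),
    tone_label("Acme offers a reporting dashboard."),
]
```

Running the same labeling on answers collected week after week is one simple way to notice a drift from confident to cautious language.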

Summary: Sentiment and tone show how safe and credible your brand feels in AI answers.

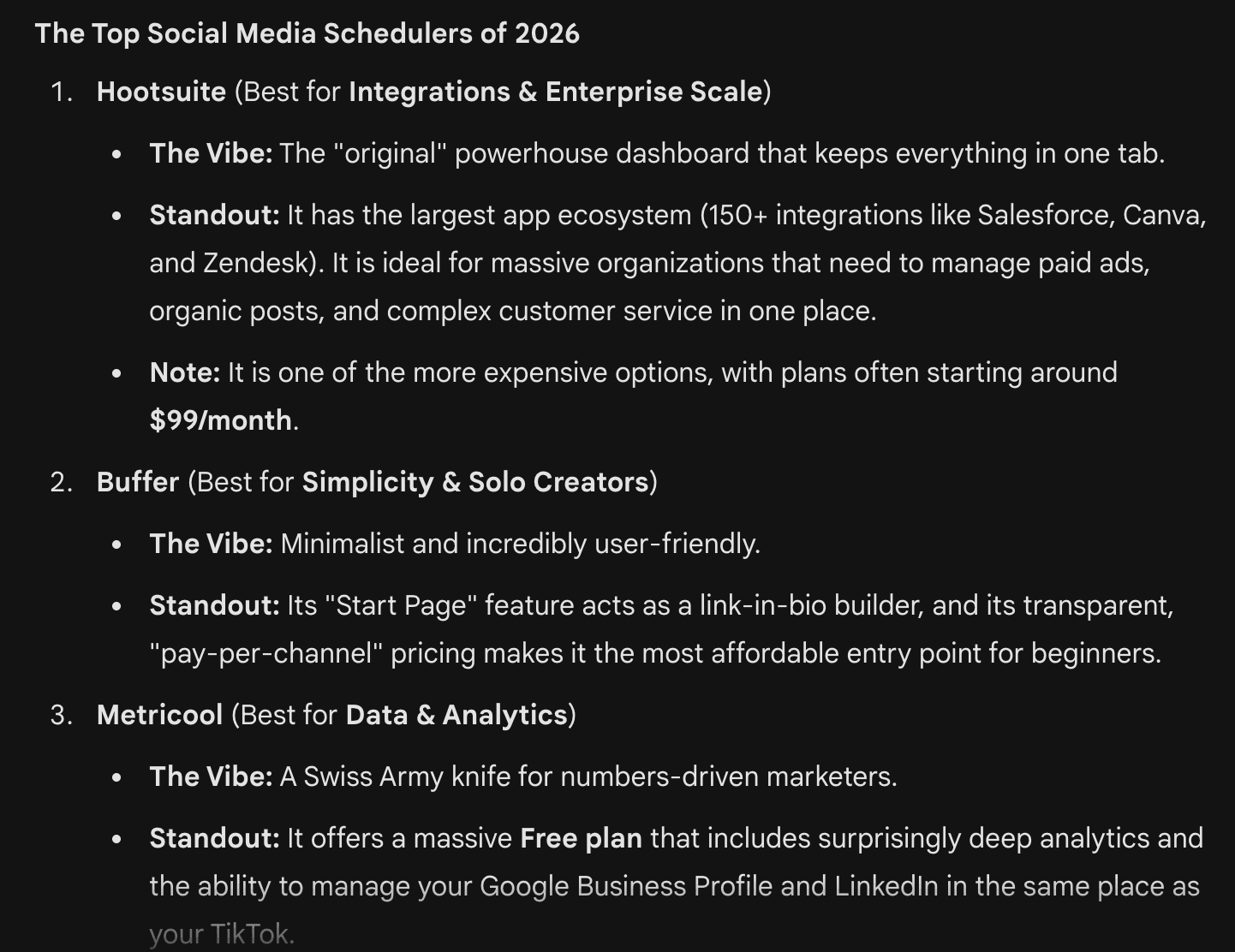

3. Competitive comparison patterns

Competitive comparison patterns show how AI groups and ranks brands in your category. They reveal who you are compared to and how you are differentiated.

When someone asks for the best tools in your space, AI typically provides a shortlist with brief summaries. The order of brands, the language used, and the reasons given for inclusion all shape perception.

It’s important to track:

Which competitors appear most often alongside you

How your strengths are described compared to theirs

Whether you are positioned as a leader, alternative, or niche option

Whether certain competitors are consistently framed as stronger in key areas

These patterns help you understand your AI-defined market position.
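One way to quantify these patterns, sketched below in Python, is to count which competitors appear in the same answers as your brand and to average your brand's position in the order brands are named. The brand names are fictional, and treating "first mentioned" as "ranked first" is an assumption that will not hold for every answer format.

```python
from collections import Counter


def comparison_patterns(answers, brand, competitors):
    """Return (co-mention counts, average mention position of `brand`)."""
    co_mentions = Counter()
    ranks = []
    for answer in answers:
        text = answer.lower()
        # Position of the first occurrence of each brand (-1 if absent).
        positions = {name: text.find(name.lower()) for name in [brand, *competitors]}
        order = [name for pos, name in sorted((p, n) for n, p in positions.items() if p >= 0)]
        if brand not in order:
            continue  # only answers that mention our brand count as comparisons
        ranks.append(order.index(brand) + 1)  # 1 = mentioned first
        for name in order:
            if name != brand:
                co_mentions[name] += 1
    average_rank = sum(ranks) / len(ranks) if ranks else None
    return co_mentions, average_rank


# Made-up answers for illustration only.
answers = [
    "Best tools: Bolt, Acme, Cirrus.",
    "Acme and Bolt are popular choices.",
    "Cirrus is ideal for small teams.",
]
co_mentions, average_rank = comparison_patterns(answers, "Acme", ["Bolt", "Cirrus"])
```

In this toy data, Bolt appears alongside Acme twice and Cirrus once, and Acme is named second and then first, for an average position of 1.5.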

Source: Google Gemini

Summary: Competitive comparison patterns reveal how AI positions you within your market.

4. Category framing

Category framing is how AI defines your industry and what it says matters when choosing within it. That definition shapes how buyers see every brand in the space, including yours.

AI’s framing can influence:

What problems buyers think this category solves

What features feel essential

What outcomes feel impressive

What trade-offs seem acceptable

What type of buyer the category is “for”

What “high-quality” looks like in this space

If your strengths line up with the way AI defines the category, your positioning feels clear and credible. If they do not, buyers may struggle to see your value.

Summary: Category framing shapes how buyers judge your entire market, not just your brand.

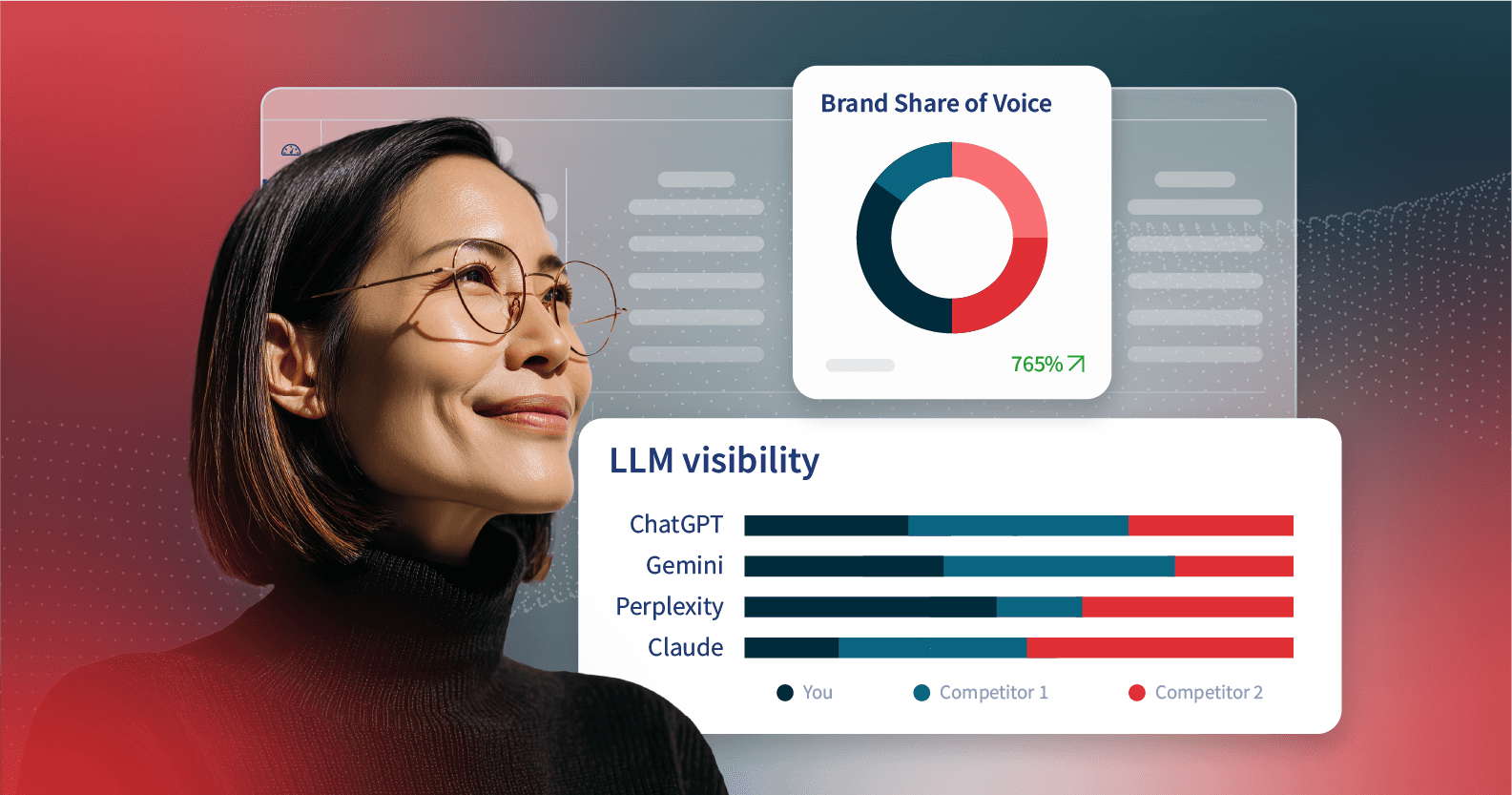

How to track brand mentions in AI search with LLM Insights

Ready to track your brand mentions with LLM Insights? Here’s how to get started with the tool, available in Talkwalker and as an add-on to Hootsuite Listening.

Quick setup overview:

Before you can view results, you need to create an LLM monitoring channel.

Go to Settings → LLM Channels

Click New channel

Add your brand prompts and monitoring goals

Select the AI models you want to track

How to read the LLM Insights dashboard

Once your LLM Channel is active, results begin populating in the LLM Insights dashboard. This dashboard shows how AI systems describe your brand, compare competitors, and frame your category.

Below is a breakdown of the main dashboard elements.

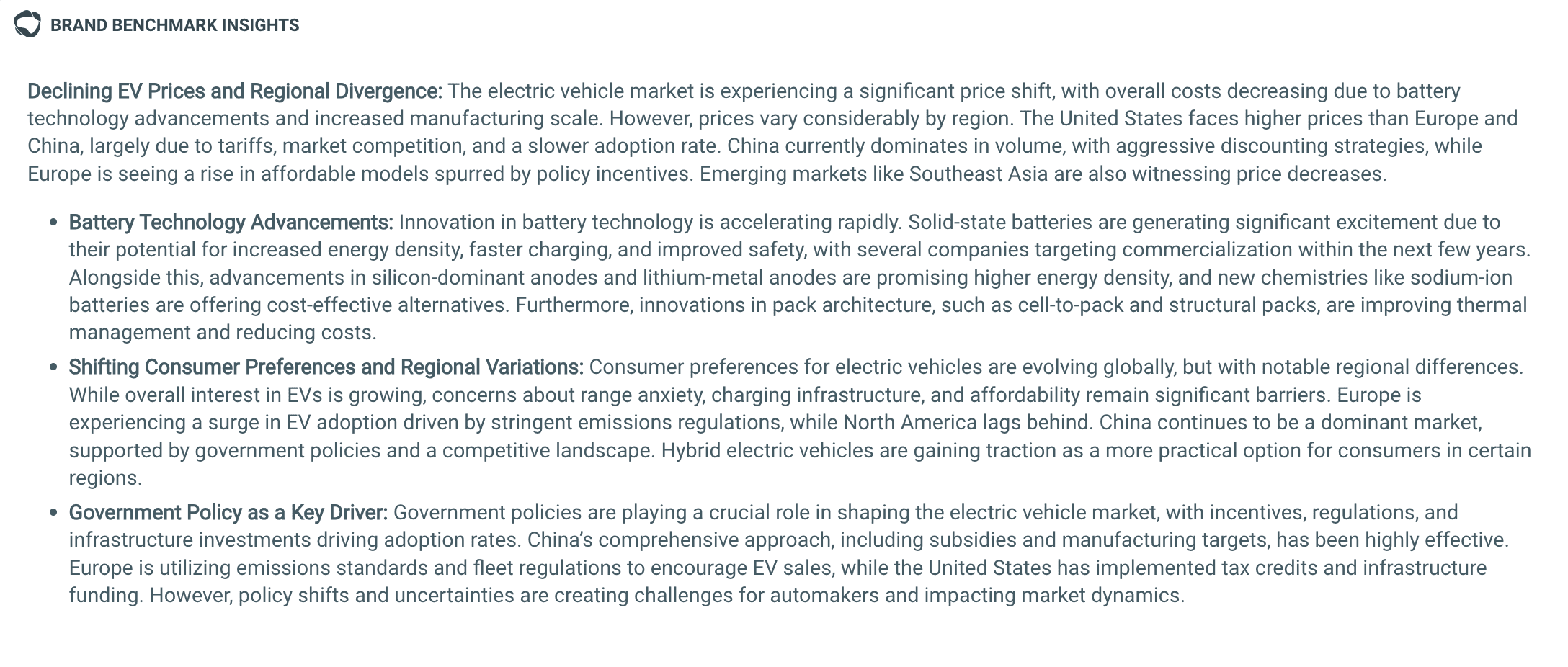

Brand benchmark insights

Brand benchmark insights summarizes the main themes AI models associate with your brand and category. It highlights recurring narratives in AI search results, such as consumer trends, product positioning, or public perception. Think of it as a simple snapshot of your overall LLM brand visibility.

Here’s what that looks like for “electric cars.”

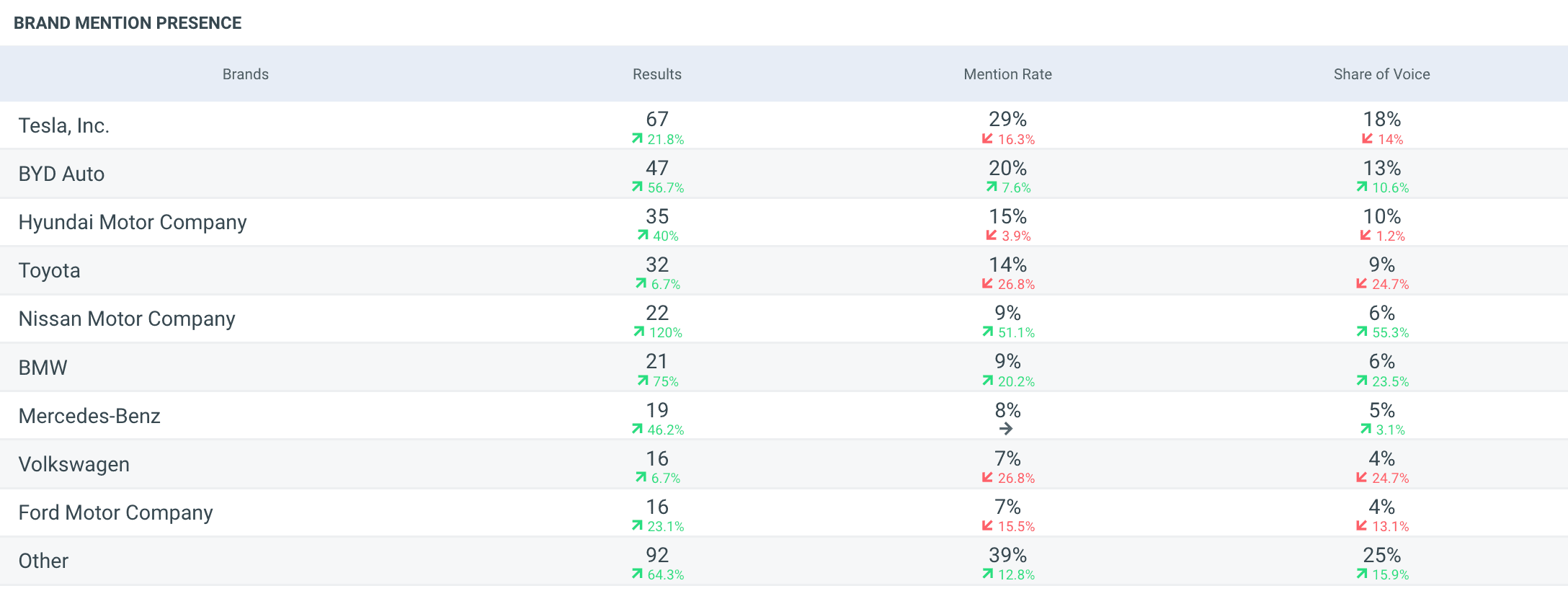

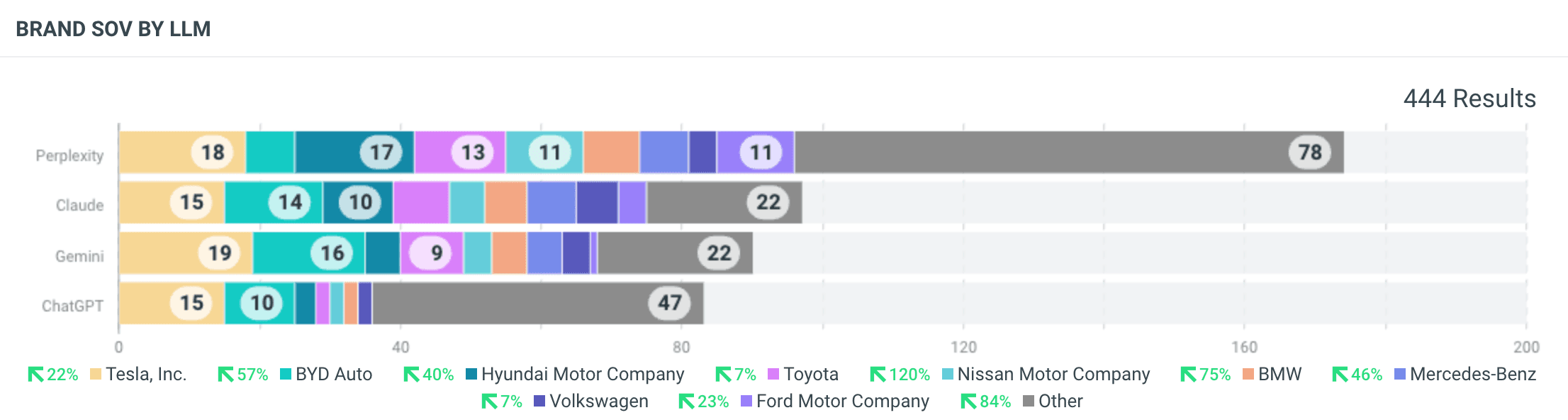

Brand mention presence

Brand mention presence shows how often each tracked brand appears in AI responses. It includes the number of results, mention rate, and share of voice, giving a quick view of which brands appear most frequently in AI-generated answers.
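For readers who want to see how these three numbers relate, here is a minimal Python sketch. It assumes that "results" is the number of answers mentioning a brand, "mention rate" is that count divided by all answers, and "share of voice" is that count divided by the mentions of all tracked brands combined. These definitions are assumptions for illustration; the dashboard may calculate its metrics differently.

```python
def presence_metrics(responses, brands):
    """Compute results, mention rate, and share of voice per brand (assumed definitions)."""
    results = {
        brand: sum(1 for text in responses if brand.lower() in text.lower())
        for brand in brands
    }
    total_mentions = sum(results.values())
    total_responses = len(responses)
    return {
        brand: {
            "results": results[brand],
            "mention_rate": results[brand] / total_responses if total_responses else 0.0,
            "share_of_voice": results[brand] / total_mentions if total_mentions else 0.0,
        }
        for brand in brands
    }


# Fictional brands and answers, for illustration only.
responses = [
    "Top picks: Acme, Bolt, and Cirrus.",
    "Acme and Bolt are both strong options.",
    "Many buyers choose Acme.",
    "Cirrus is a niche alternative.",
]
metrics = presence_metrics(responses, ["Acme", "Bolt", "Cirrus"])
```

With this data, Acme appears in three of four answers (a 75% mention rate) and accounts for three of the seven brand mentions overall.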

Brand

The Brand chart shows aggregated visibility across all major LLMs. It’s the simplest snapshot of how often each tracked brand appears in AI overviews and answers overall, giving you a quick read on who dominates the conversation across AI systems.

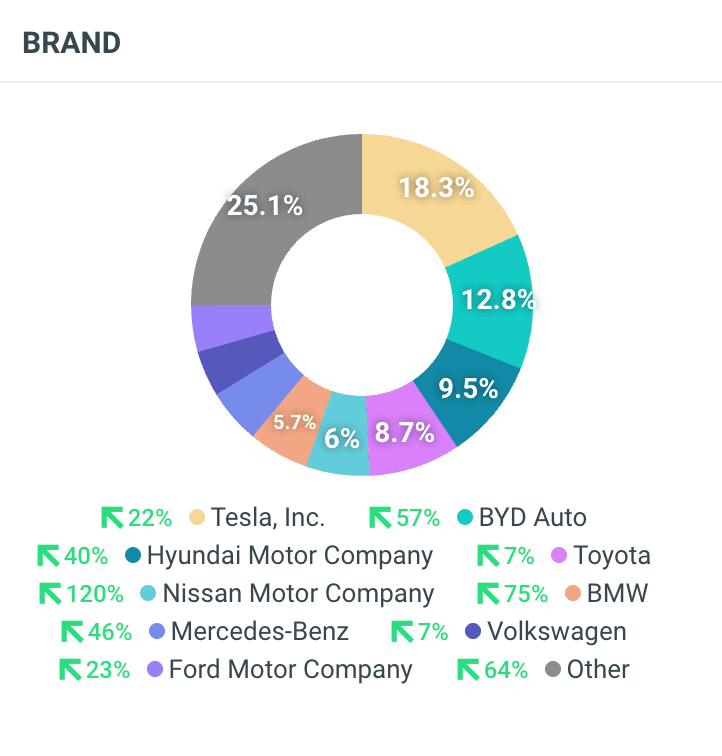

Brand SOV over time

This chart tracks share of voice over time for the brands in your dataset. It helps identify trends in visibility and reveals whether competitors are gaining or losing presence in AI mentions.
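The trend behind a chart like this can be sketched by grouping answers by period and recomputing share of voice within each group. The Python below is an illustrative sketch only: the monthly period labels, the fictional brands, and the definition of share of voice (a brand's mentions divided by all tracked-brand mentions in that period) are assumptions.

```python
from collections import defaultdict


def sov_by_period(records, brands):
    """records: iterable of (period, answer_text). Returns {period: {brand: share}}."""
    counts = defaultdict(lambda: {brand: 0 for brand in brands})
    for period, text in records:
        period_counts = counts[period]  # ensures the period exists even with no mentions
        lowered = text.lower()
        for brand in brands:
            if brand.lower() in lowered:
                period_counts[brand] += 1
    trend = {}
    for period in sorted(counts):
        total = sum(counts[period].values())
        trend[period] = {
            brand: (n / total if total else 0.0) for brand, n in counts[period].items()
        }
    return trend


# Made-up monthly snapshots for two fictional brands.
records = [
    ("2026-01", "Acme and Bolt lead the category."),
    ("2026-01", "Acme is a common recommendation."),
    ("2026-02", "Bolt is the top pick; Acme is an alternative."),
    ("2026-02", "Bolt is widely recommended."),
]
trend = sov_by_period(records, ["Acme", "Bolt"])
```

In this example Acme's share falls from two-thirds to one-third between the two months while Bolt's rises, which is the kind of shift the chart is meant to surface.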

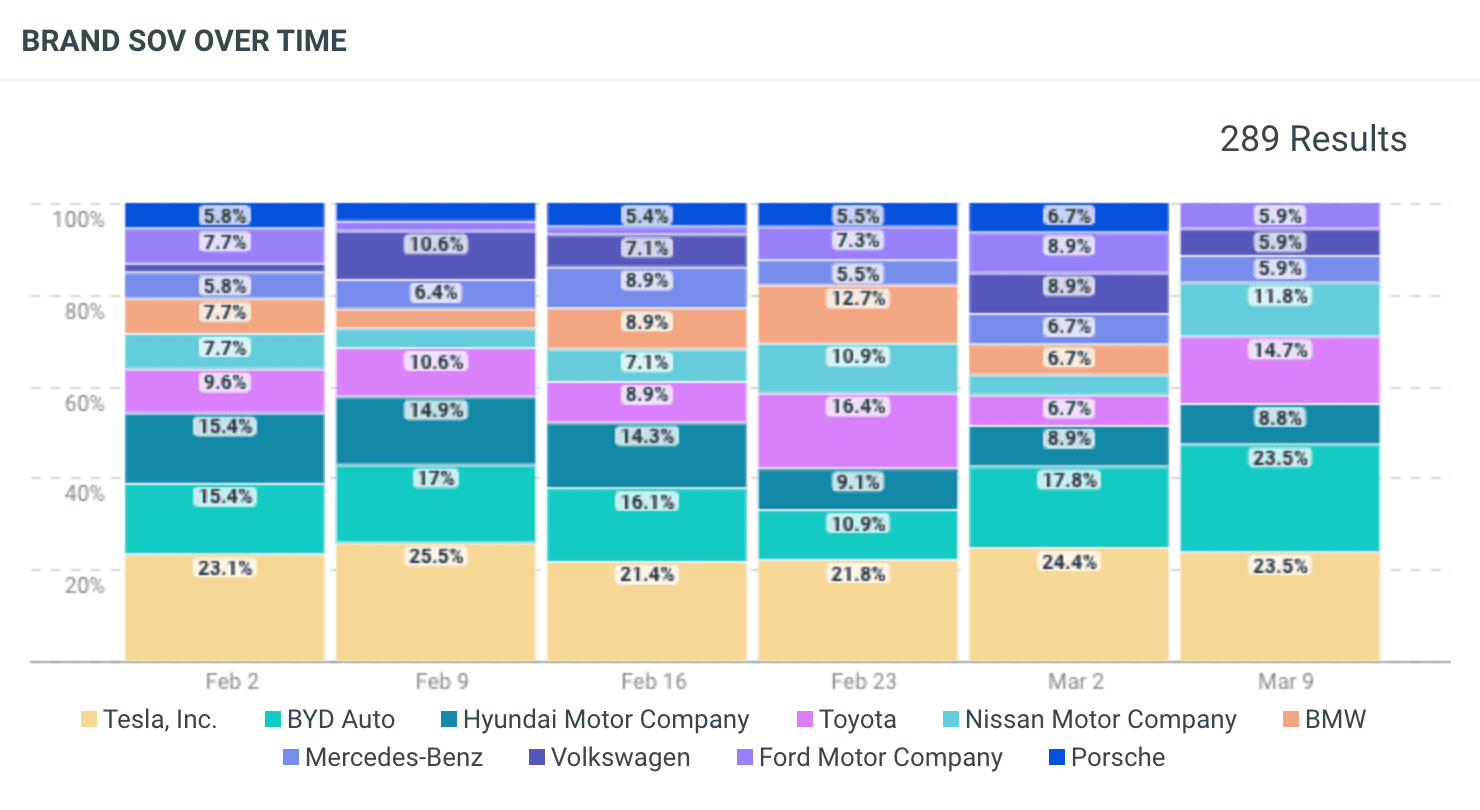

Brand SOV by LLM

The Brand SOV by LLM view breaks down brand share of voice by individual AI models, such as ChatGPT, Google AI, Perplexity, and Claude. Because each model may interpret information differently, this tile helps teams understand where their brand performs best across AI platforms.

Summary: The Talkwalker LLM Insights dashboard shows how often your brand appears in AI-generated answers, how competitors compare, and how brand visibility changes across different AI models over time.

The business case for tracking AI brand mentions strategically

Learning how to track brand mentions in AI search supports four core business priorities: visibility, risk management, internal alignment, and long-term brand perception.

Research from McKinsey shows that roughly half of consumers now use AI-powered search as part of their buying process, and nearly half say it has become their primary source of information.

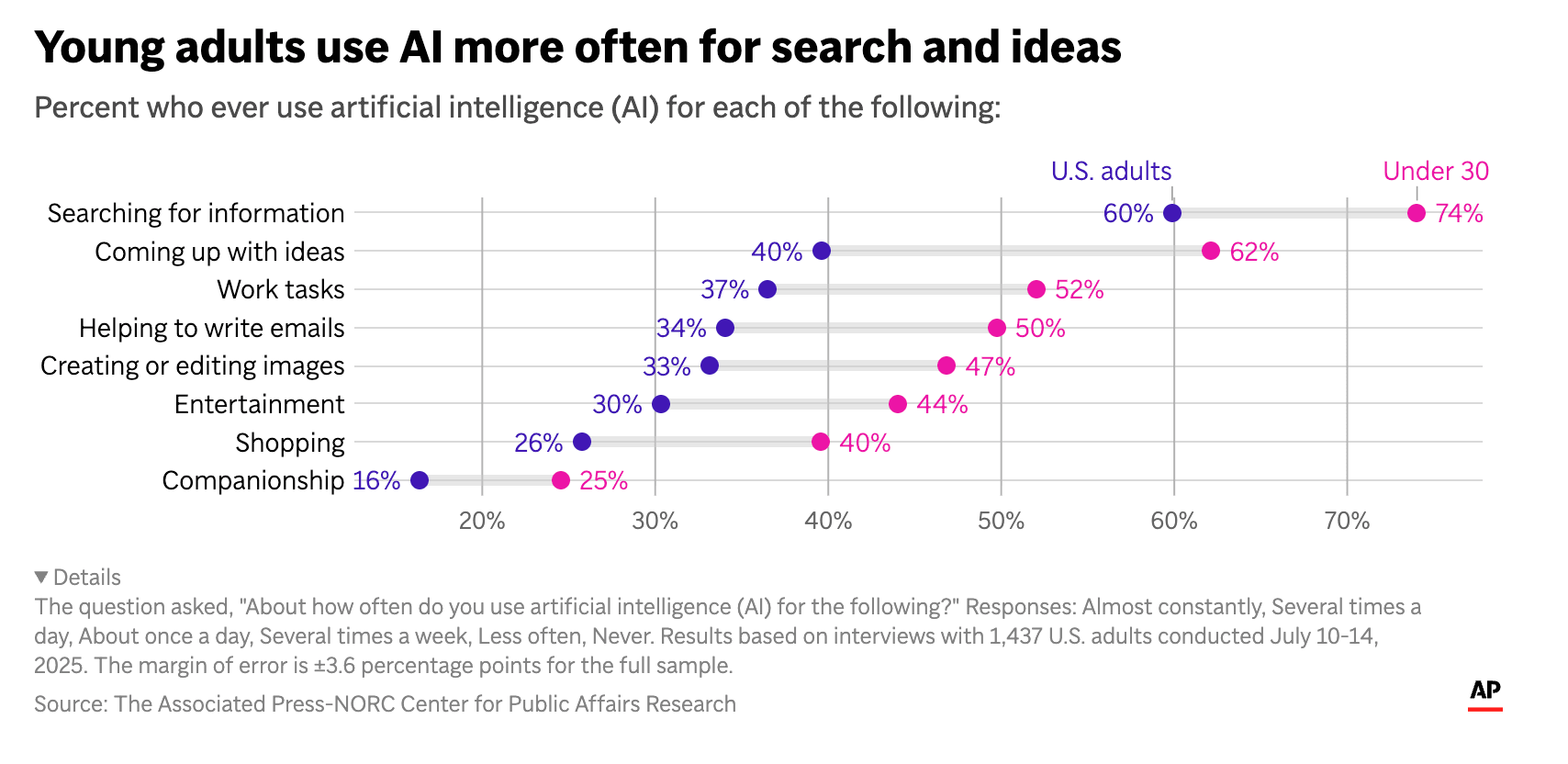

Separate polling from the Associated Press-NORC Center for Public Affairs Research found that 60% of U.S. adults already use AI tools to search for information, with adoption even higher among younger audiences.

Source: Associated Press-NORC Center for Public Affairs Research

When AI search becomes a normal part of how people gather information, the way your brand appears in those answers becomes a business issue.

Here’s what that looks like in practice.

1. Reduce blind spots in AI-driven discovery

AI assistants are now part of how people research products. Buyers ask for comparisons, recommendations, and summaries before they ever land on your site.

If your brand doesn’t appear, or if it’s described inaccurately, you may never see that missed opportunity in your web analytics.

Tracking AI brand mentions helps you see:

Whether your brand appears in recommendation lists

How your strengths are described

Whether competitors are framed more favorably

How your category is being defined

2. Detect reputational and narrative risk earlier

AI answer engines repeat what they find online. If negative stories, old complaints, or incorrect claims start appearing more often, they can quickly show up in AI answers.

Tracking AI brand mentions helps you catch:

The same criticism appearing again and again

Changes in tone that make your brand sound less confident

Old information being presented as current

New competitors being positioned as stronger options

When you see these patterns early, you have time to fix the issue, update messaging, or respond publicly if needed.

Pro Tip: Social media crises can turn into LLM visibility crises if left unchecked. Follow our guide to recover your reputation on social and in search.

3. Improve cross-functional decision-making

What AI says about your brand affects more than marketing. It shapes how buyers think, how sales conversations start, and how leadership sees brand health.

Tracking AI brand mentions gives different teams the same view of what’s happening. It helps:

Marketing teams adjust messaging

PR teams spot recurring themes

Sales understand how prospects may already see the brand

Insights teams report on positioning changes

Executives understand risk and opportunity

When everyone sees the same data, decisions become clearer and faster.

4. Govern AI perception at scale

Checking a few prompts now and then is not enough. AI answers change over time, vary by platform, and shift as new information appears online. Without a consistent system, it’s difficult to know whether what you’re seeing is a one-off result or part of a larger trend.

Reactive vs strategic AI monitoring

| Reactive approach | Strategic approach |
| --- | --- |
| Manual prompt checks | Structured monitoring |
| Screenshots in Slack | Repeatable dashboards |
| One-off fixes | Long-term tracking |
| Guesswork | Documented insights |

Strategic tracking creates a steady system. It allows you to:

Monitor how your brand is described across AI tools

See changes in tone or positioning over time

Compare your brand with competitors in a consistent way

Share structured updates with leadership

This turns AI brand monitoring into part of regular brand oversight, rather than something you check only when there’s a problem.

The emotional reality leaders aren’t talking about

Whether you’re prepared for it or not, AI tools are now part of how your brand is judged.

AI-generated summaries shape how prospects understand who you are, what you offer, and how you compare. Those summaries don’t stay in the chat. They get shared in Slack, referenced in sales calls, and sometimes land in front of the C-suite. Over time, the AI version of your brand can carry as much weight as your own messaging.

No leader wants to be surprised by a screenshot that tells a slightly different story than the one the company believes it’s telling.

The answer isn’t to resist AI, but to monitor it. When you can see how AI tools describe your brand — across platforms and over time — you regain control of the narrative.

Ready to try it for yourself? Try LLM Insights today.